Google Cloud Storage

Overview

The Google Cloud Storage (GCS) connector imports event data directly from a GCS bucket into the Decentriq DMP.

Prerequisites

- Have a Google Cloud account.

- Have an existing Google Cloud Storage bucket containing the data to import.

- Have service account credentials (a JSON key file) for a service account that can read the bucket.

Step-by-step guide

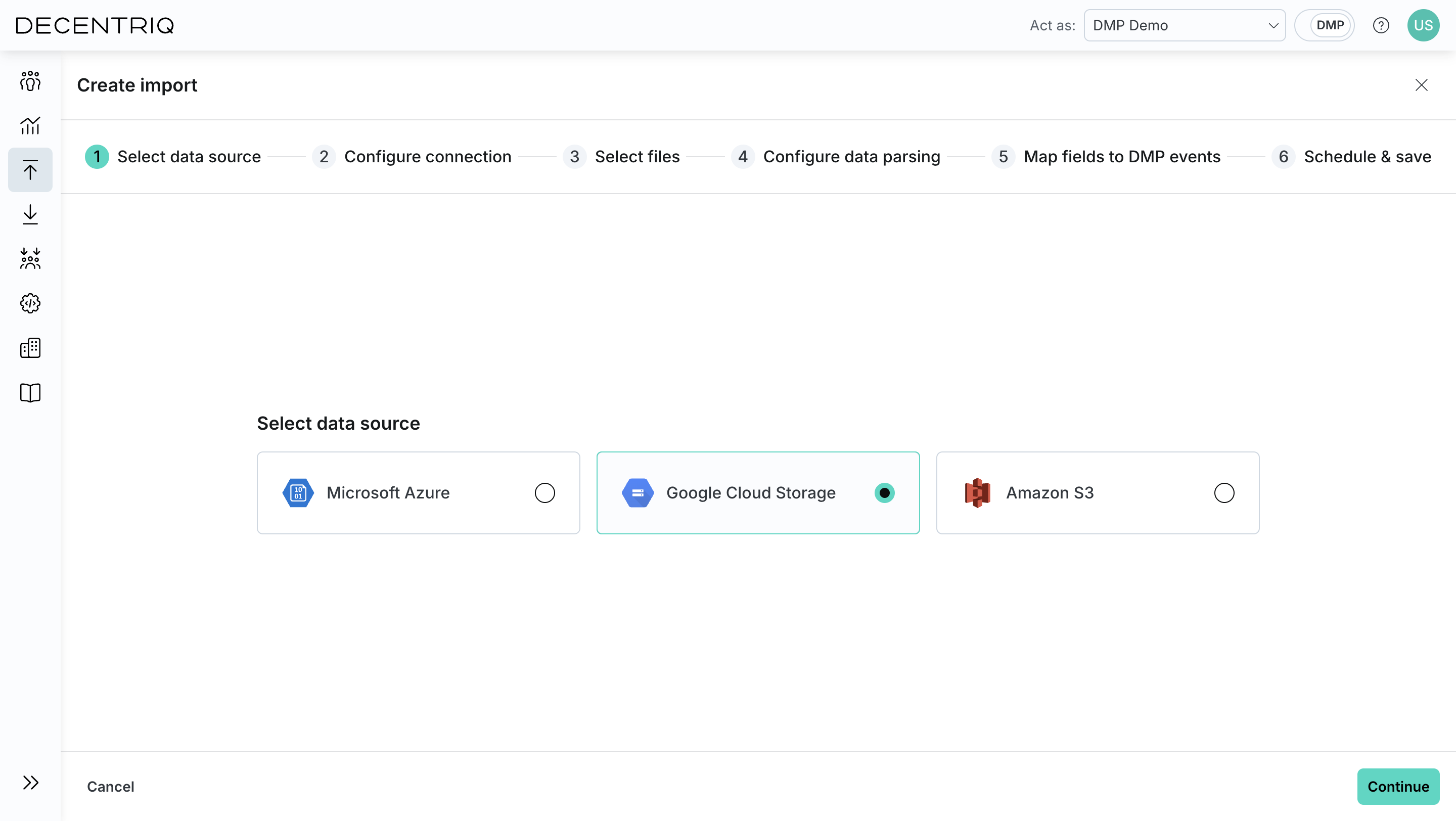

Step 1: Select the source

Follow the steps to create a new import and choose Google Cloud Storage from the list of available data sources.

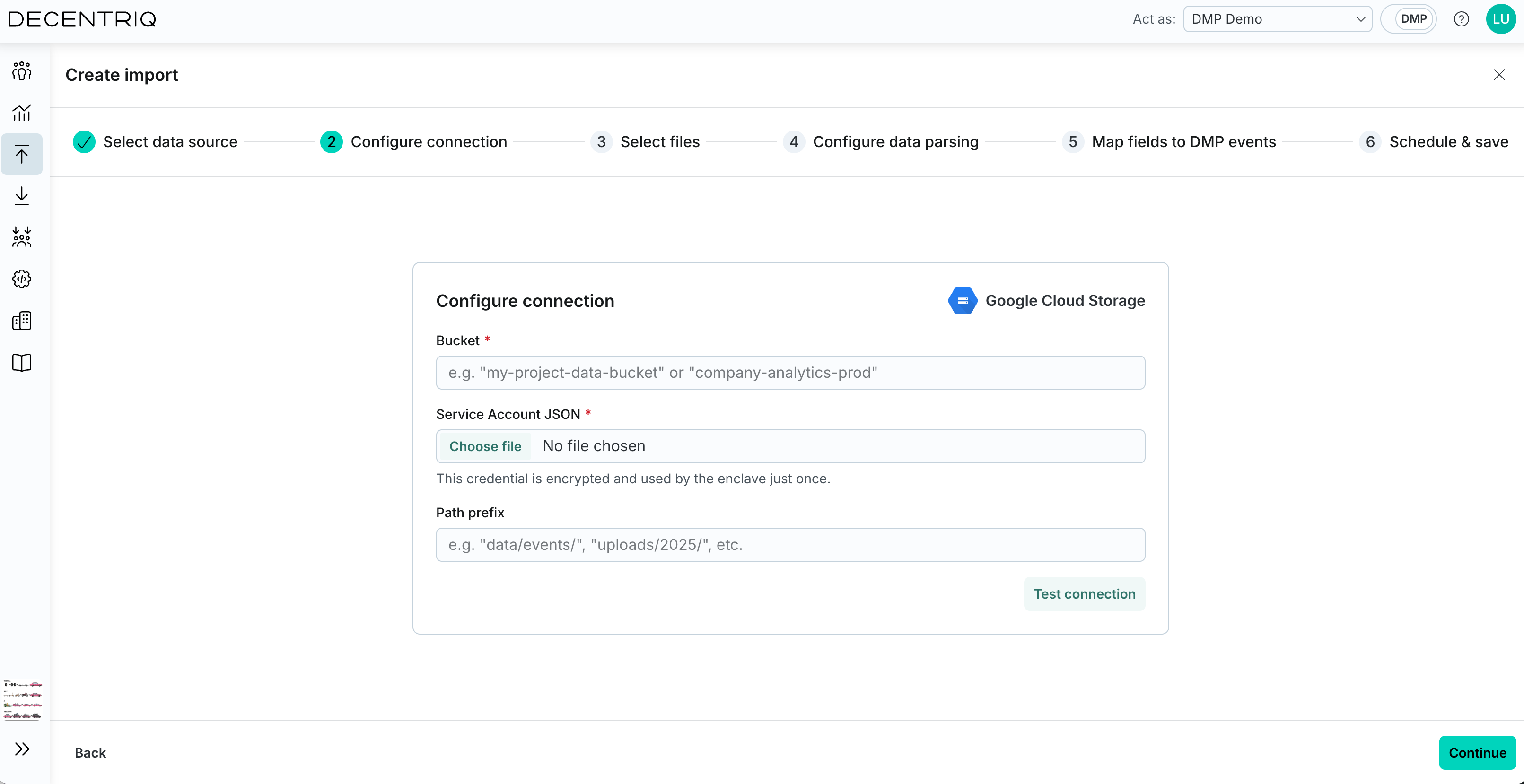

Step 2: Configure connection

Input the required information:

- Bucket: Name of the GCS bucket from which the data should be imported.

- Service Account JSON: The service account credentials associated with the Google Cloud account (see the Google documentation for more details). This is the JSON key file generated when creating a key for the service account. It contains the following fields:

  - type: Identifies the type of credentials (this will be set to service_account).

  - project_id: The ID of your Google Cloud project.

  - private_key_id: The identifier for the private key.

  - private_key: The private key used for authentication, in PEM format.

  - client_email: The email address of the service account.

  - client_id: A unique identifier for the service account.

  - auth_uri: The URL to initiate OAuth2 authentication requests.

  - token_uri: The URL to retrieve OAuth2 tokens.

  - auth_provider_x509_cert_url: The URL to get Google's public certificates for verifying signatures.

  - client_x509_cert_url: The URL to the public certificate for the service account.

  - universe_domain: The domain of the API endpoint.

- Path prefix: An optional prefix used to limit the scope of retrieved objects to a specific folder or directory within the bucket.

Click Test connection to verify that the credentials and configuration are valid.
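Before pasting the key file into the form, it can help to check locally that it is well-formed. The following is a minimal sketch of such a check and is not part of the DMP: the function name `check_service_account_json` is hypothetical, the field list is taken from the description above, and the PEM test is a simple heuristic. Key files created some time ago may lack some of the listed fields (for example universe_domain), so a reported missing field is a hint rather than proof that the connection will fail.

```python
import json

# Field names as listed in the Service Account JSON description above.
EXPECTED_FIELDS = [
    "type", "project_id", "private_key_id", "private_key", "client_email",
    "client_id", "auth_uri", "token_uri", "auth_provider_x509_cert_url",
    "client_x509_cert_url", "universe_domain",
]


def check_service_account_json(text: str) -> list[str]:
    """Return a list of problems found; an empty list means none were found."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc}"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]

    problems = [f"missing field: {name}" for name in EXPECTED_FIELDS if name not in data]
    if data.get("type") != "service_account":
        problems.append('"type" is not "service_account"')
    # Assumption: a PEM-encoded key starts with a "-----BEGIN" header line.
    key = data.get("private_key", "")
    if not isinstance(key, str) or not key.startswith("-----BEGIN"):
        problems.append('"private_key" does not look PEM-encoded')
    return problems
```

Calling the function with the contents of the key file returns an empty list when every listed field is present and the basic checks pass. It does not verify that the credentials are accepted by Google Cloud; that is what Test connection is for.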

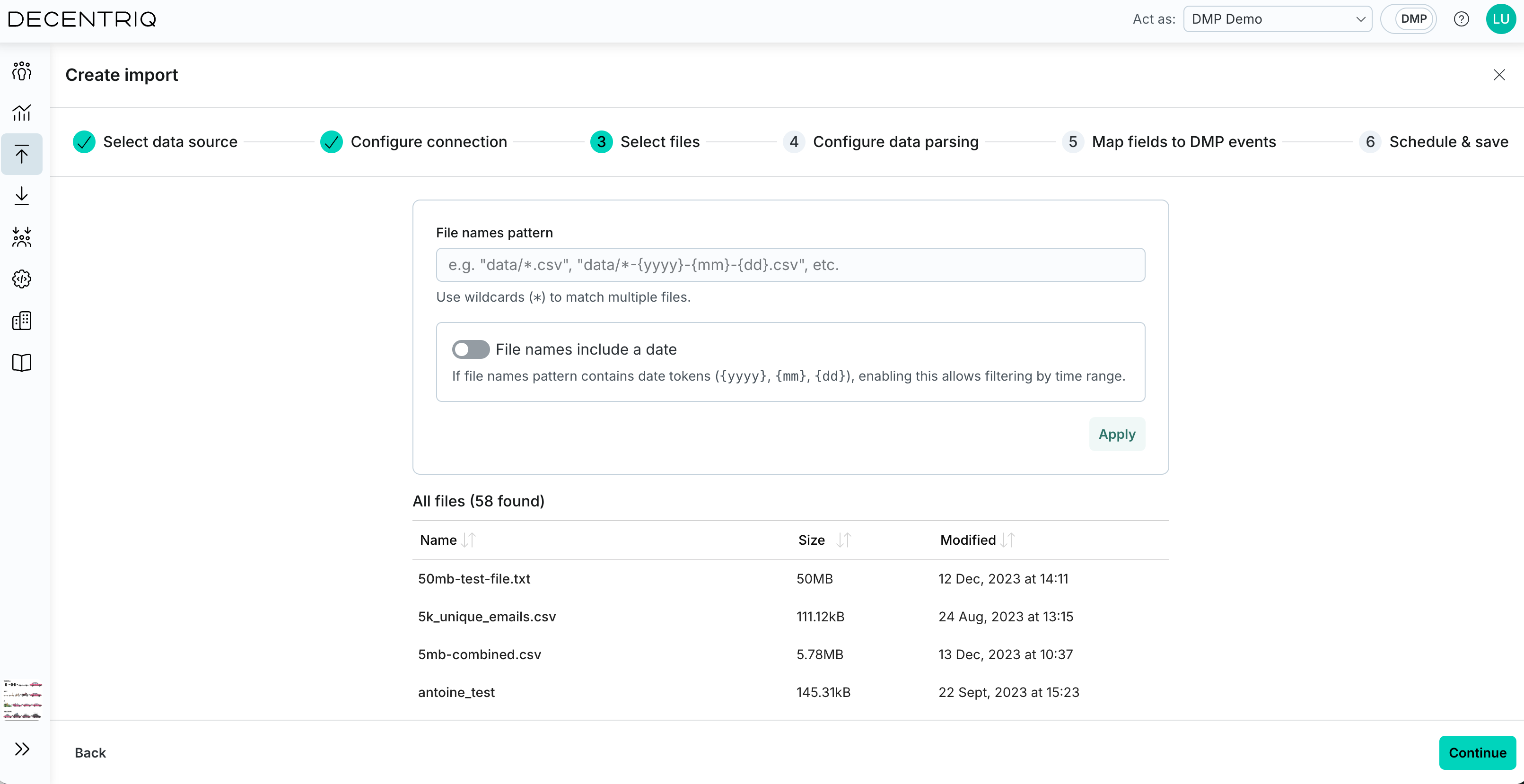

Step 3: Select files

Click Continue to proceed to file selection. Configure which files should be imported:

- File names pattern: Define a path and file name pattern to match files (for example data/*.csv or data/*-{yyyy}-{mm}-{dd}.csv). Wildcards (*) are supported.

- File names include a date (optional): Enable this if the pattern contains date tokens ({yyyy}, {mm}, {dd}), then select an absolute or relative time range.

- Click Apply to update the list of matching files. The table displays all files that match the configured pattern and time range.
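To build intuition for how a file names pattern and a time range narrow down the list of files, the sketch below imitates the selection locally. It is an illustration under stated assumptions, not the DMP's actual matching logic: the helper names `pattern_to_regex` and `match_files` are hypothetical, `*` is assumed not to cross a `/`, each date token is assumed to be fixed-width digits and to appear at most once, and the time range is treated as inclusive on both ends.

```python
import re
from datetime import date

# Assumption: each date token stands for a fixed-width run of digits.
TOKEN_PATTERNS = {
    "{yyyy}": r"(?P<yyyy>\d{4})",
    "{mm}": r"(?P<mm>\d{2})",
    "{dd}": r"(?P<dd>\d{2})",
}


def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a file names pattern into a compiled regular expression."""
    parts = re.split(r"(\*|\{yyyy\}|\{mm\}|\{dd\})", pattern)
    pieces = []
    for part in parts:
        if part == "*":
            pieces.append(r"[^/]*")  # assumption: "*" stays within one path segment
        elif part in TOKEN_PATTERNS:
            pieces.append(TOKEN_PATTERNS[part])
        else:
            pieces.append(re.escape(part))  # literal text
    return re.compile("".join(pieces))


def match_files(names, pattern, start=None, end=None):
    """Return the names that match the pattern and fall inside [start, end]."""
    regex = pattern_to_regex(pattern)
    matched = []
    for name in names:
        m = regex.fullmatch(name)
        if m is None:
            continue
        groups = m.groupdict()
        if all(token in groups for token in ("yyyy", "mm", "dd")):
            try:
                file_date = date(int(groups["yyyy"]), int(groups["mm"]), int(groups["dd"]))
            except ValueError:
                continue  # digits that do not form a real calendar date
            if (start and file_date < start) or (end and file_date > end):
                continue
        matched.append(name)
    return matched
```

For example, with the pattern data/*-{yyyy}-{mm}-{dd}.csv and a range covering January 2024, an object named data/events-2024-01-15.csv is kept, while data/events-2024-02-01.csv and other/events-2024-01-15.csv are not.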

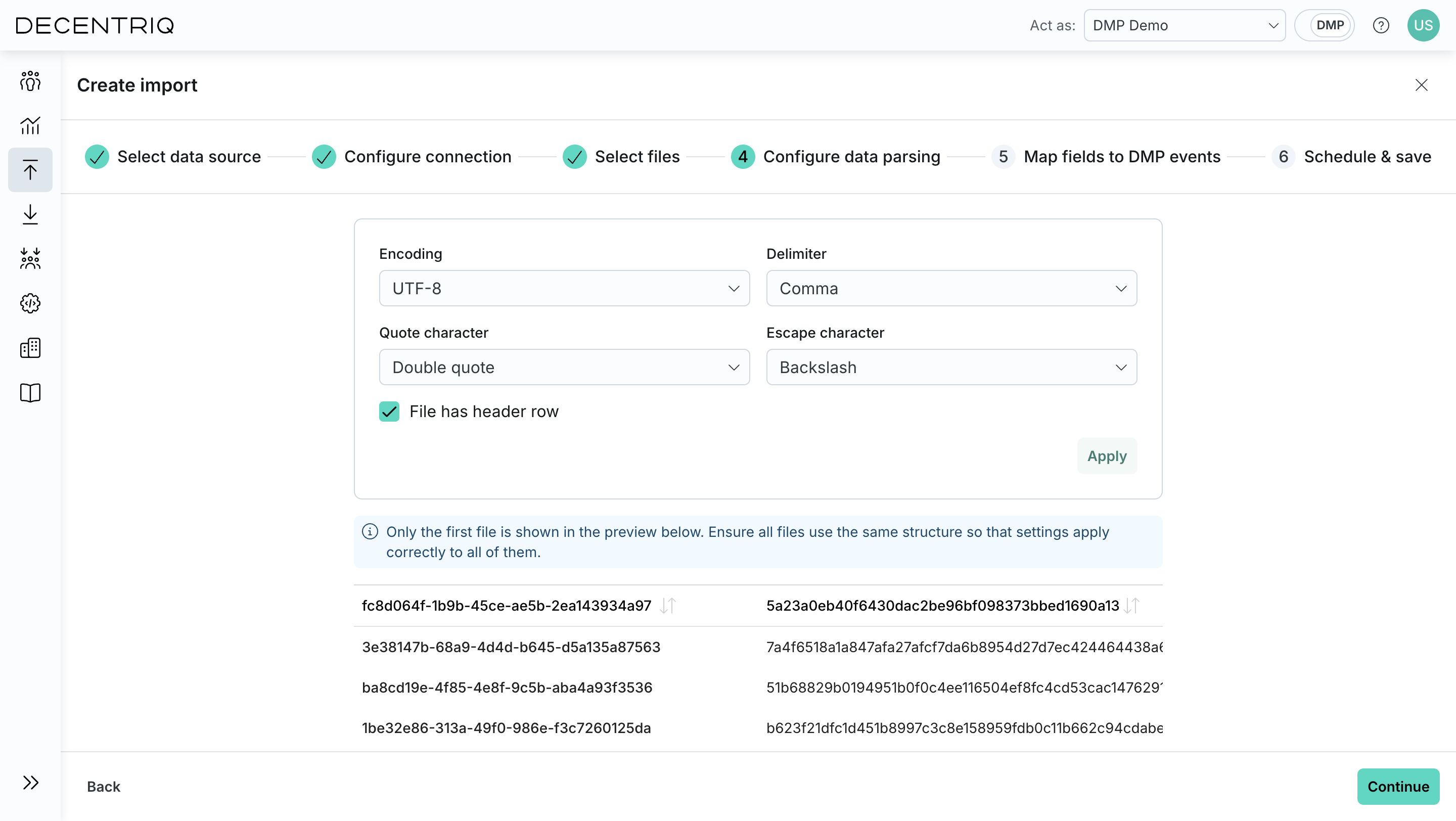

Step 4: Configure parsing

Click Continue to proceed to data parsing. Define how the selected files should be parsed, including format-specific options such as delimiters, headers, or timestamp handling.
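The effect of the delimiter, header, and timestamp options can be previewed on a sample file before configuring the import. The sketch below uses only the Python standard library and is an approximation for local experimentation: the function `parse_rows`, its parameters, the default timestamp format, and the choice to treat timestamps without an offset as UTC are all assumptions, not documented DMP behavior.

```python
import csv
import io
from datetime import datetime, timezone


def parse_rows(text, delimiter=",", has_header=True, timestamp_column=None,
               timestamp_format="%Y-%m-%dT%H:%M:%S"):
    """Parse delimited text into a list of dicts, one per data row."""
    rows = list(csv.reader(io.StringIO(text), delimiter=delimiter))
    if not rows:
        return []
    if has_header:
        header, data = rows[0], rows[1:]
    else:
        # Without a header row, fall back to positional column names.
        header = [f"column_{i}" for i in range(len(rows[0]))]
        data = rows

    records = []
    for row in data:
        record = dict(zip(header, row))
        if timestamp_column is not None:
            parsed = datetime.strptime(record[timestamp_column], timestamp_format)
            # Assumption: timestamps without an offset are treated as UTC.
            record[timestamp_column] = parsed.replace(tzinfo=timezone.utc)
        records.append(record)
    return records
```

Running it on the first few lines of a file with different delimiter and header settings shows quickly whether columns split as expected and whether the timestamp column parses.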

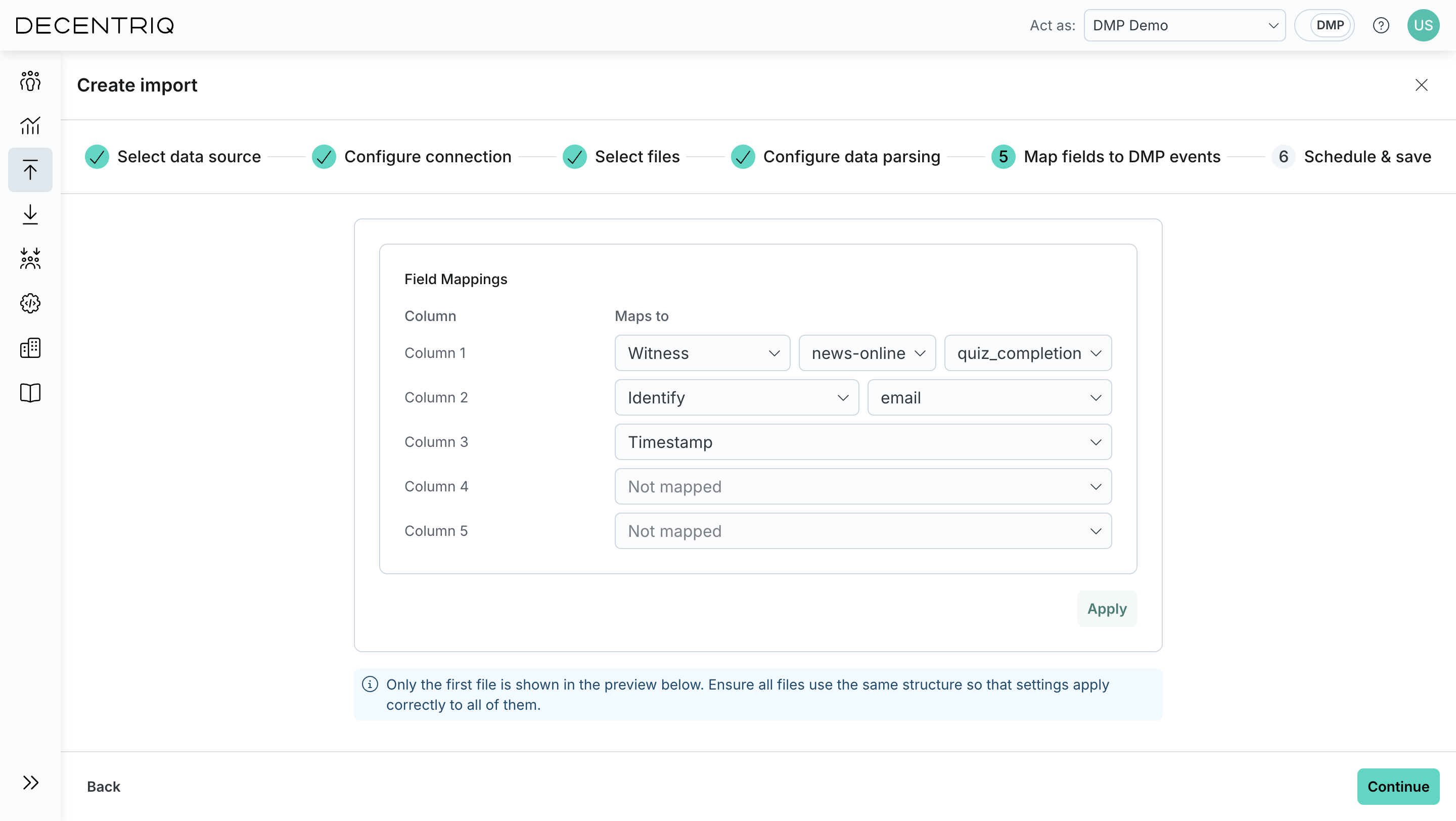

Step 5: Map fields

Click Continue to proceed to field mapping. Map the parsed fields to DMP event attributes. This step defines how incoming data is transformed into DMP events and user profile updates.
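Conceptually, field mapping renames each parsed column to the attribute it should populate. The sketch below illustrates that idea only: the source column names, the target attribute names (`user_id`, `event_type`, `timestamp`), and the choice to drop unmapped columns are hypothetical and do not reflect the DMP's actual attribute schema or behavior.

```python
# Hypothetical mapping: source column name -> target attribute name.
FIELD_MAPPING = {
    "uid": "user_id",
    "action": "event_type",
    "ts": "timestamp",
}


def map_record(record: dict, mapping: dict) -> dict:
    """Rename mapped columns; columns without a mapping are dropped (assumption)."""
    return {
        target: record[source]
        for source, target in mapping.items()
        if source in record
    }
```

Applied to a parsed row such as {"uid": "u1", "action": "click", "ts": "2024-01-15", "extra": "x"}, this keeps the three mapped values under their new names and leaves out the unmapped "extra" column.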

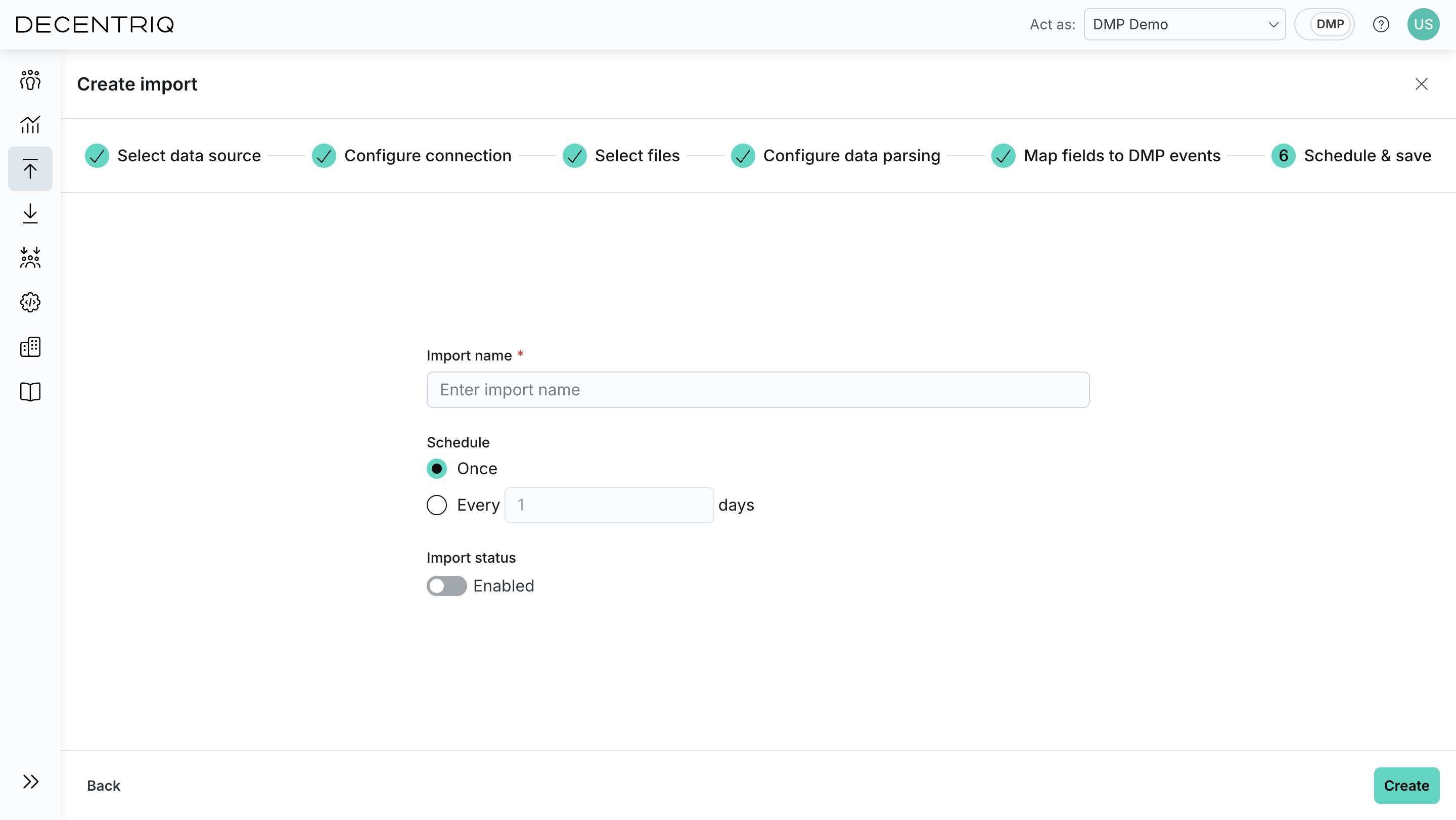

Step 6: Schedule and name

Click Continue to proceed to scheduling. Name the import and define how often it should run.

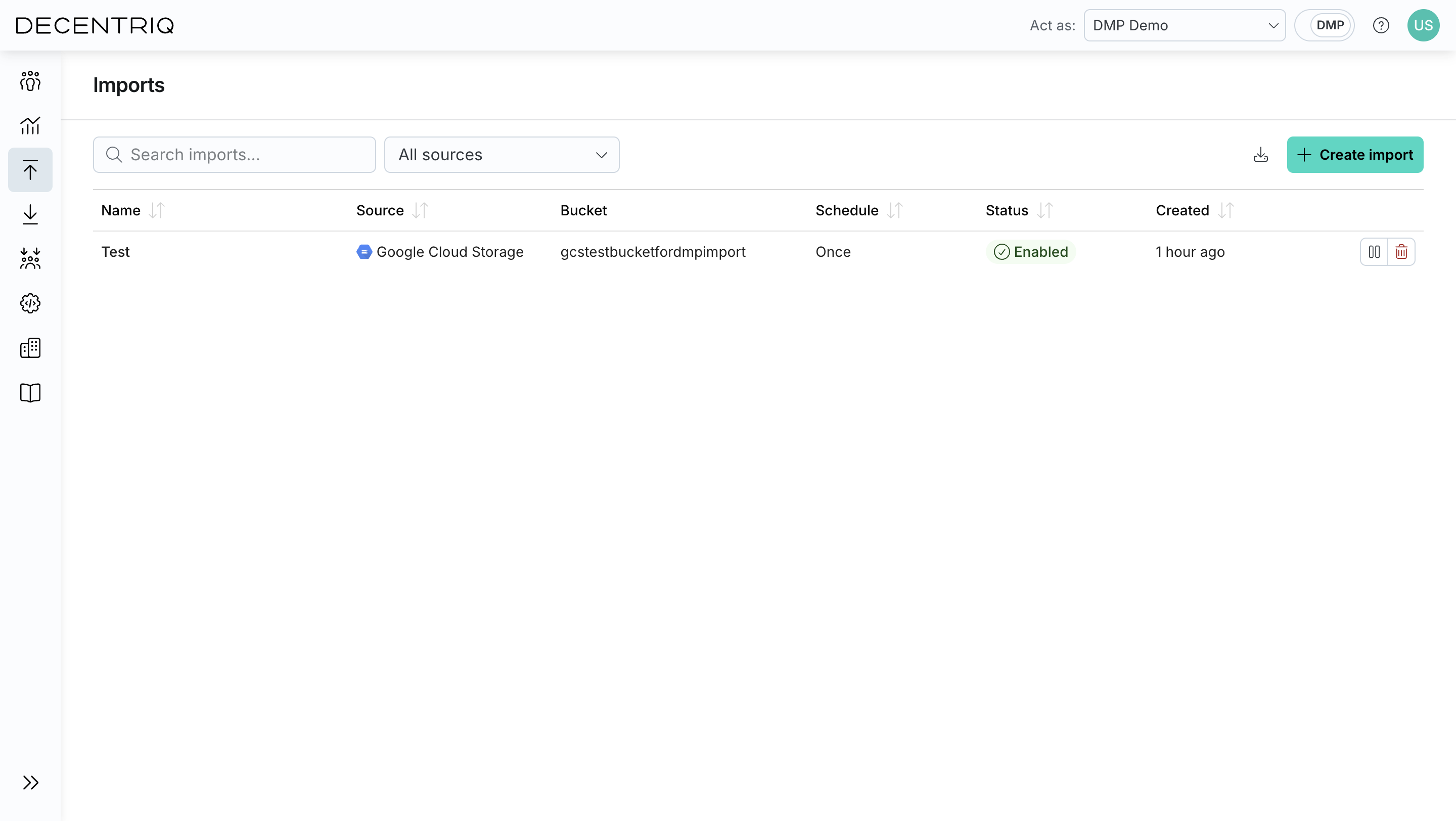

Step 7: Save the import

Click Create to create the import. The import then appears in the imports table with the name provided in the previous step.